Vizuara Research

Bootcamps

From foundations to publishing at top-tier venues. We run intensive AI & ML research bootcamps that prepare you to write and submit original research papers.

Publication Venues of Our Bootcamp Students

Featured Research

A curated look at the papers our researchers and students have published at leading AI/ML conferences.

Research Bootcamps

Intensive training programs designed to accelerate your journey from learning AI/ML to publishing original research at top-tier venues.

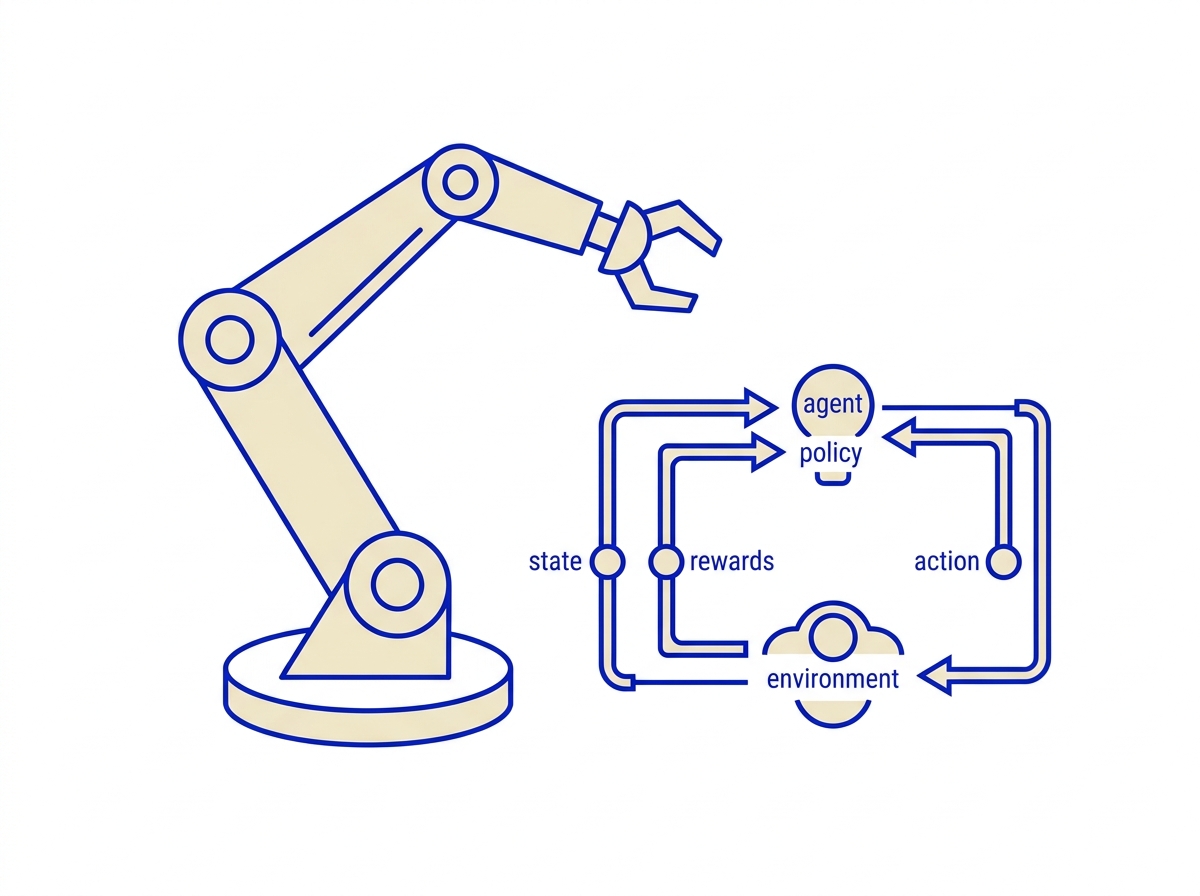

Reinforcement Learning Research Bootcamp

A comprehensive program for writing high-quality research papers in Reinforcement Learning.

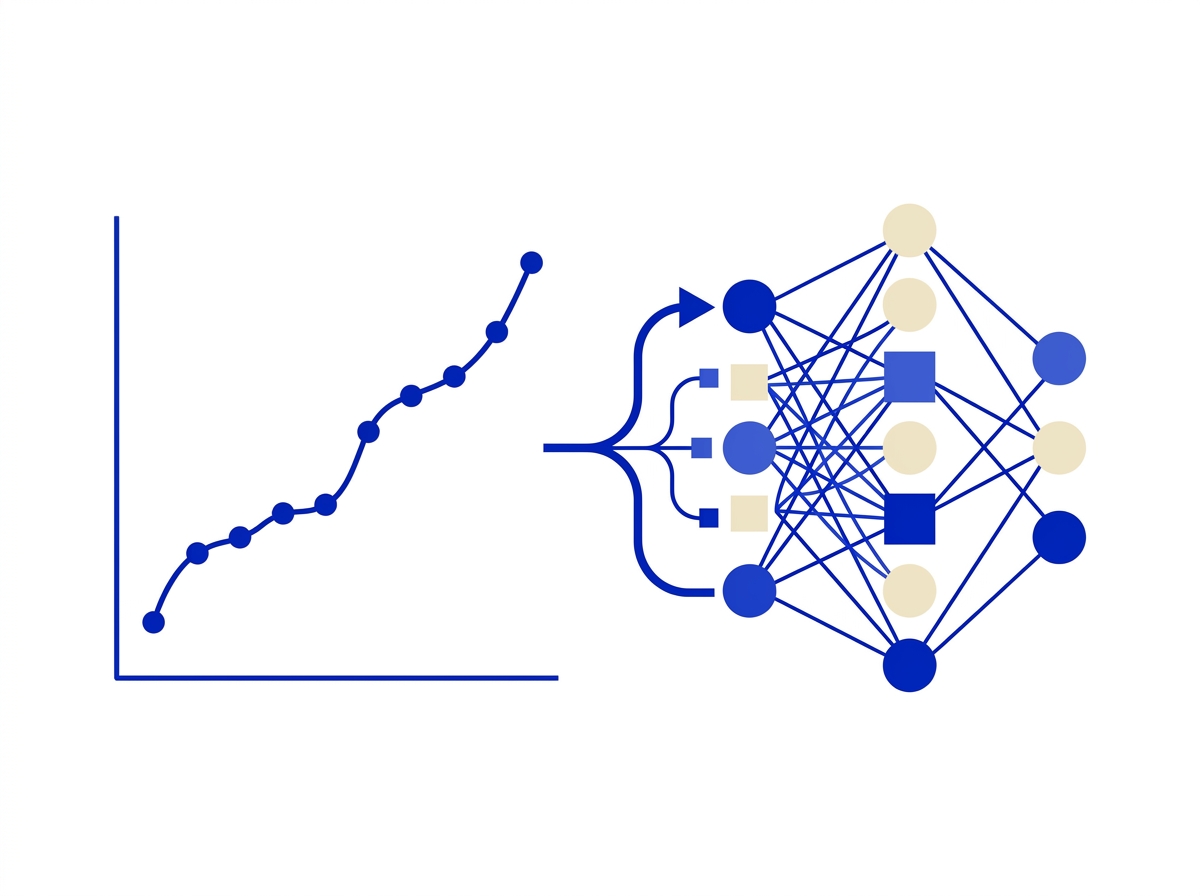

Scientific Machine Learning Bootcamp

PINNs, scientific computing, and publication guidance for real-world physics problems.

ML/DL Research Bootcamp

Deep Learning Architectures, Research Papers, and Industry Applications.

Gen AI Professional Bootcamp

Advanced Model Architectures, Research Methodologies, and Novel Algorithm Development.

AI High School Research Bootcamp

Research Fundamentals, Mentorship, and College Prep for high school students.

Computer Vision Research Bootcamp

Build foundations, work on impactful CV problems, and publish at top-tier venues.

Prathamesh Joshi

Max Planck Institute alum · Generative AI & Scientific ML

Prathamesh brings expertise spanning Generative AI and Scientific Machine Learning, with publications at ICLR Workshops, IEEE conferences, and other top venues. He has mentored students through intensive bootcamps, guiding them toward publications at NeurIPS Workshops, ICLR, JuliaCon, and AAAI Workshops.

Have questions about our programs? Reach out directly.

Email us to book a free 1:1 consultation call.

Areas of Investigation

Each bootcamp has dedicated research tracks. The focus areas across all six programs are listed below.

Scientific ML

5 areas

Physics-Informed Neural Networks

Encoding physical laws directly into neural network training for constrained, interpretable predictions.

Universal Differential Equations

Combining mechanistic models with neural networks to discover missing dynamics from data.

Neural ODEs

Continuous-depth models that parameterize dynamics as neural networks for time-series and physics.

Hybrid Models

Blending analytical knowledge with data-driven learning for robust scientific predictions.

GenAI + SciML

Leveraging large language models and generative AI to accelerate scientific ML research.

Reinforcement Learning

6 areas

Fine-tuning LLMs with RL

Using reinforcement learning to fine-tune large language models for specific tasks and alignment.

Aligning SLMs to Human Preferences

Training small language models toward human preferences using RLHF and DPO techniques.

Reasoning LLMs via RL

Developing chain-of-thought and reasoning capabilities in LLMs through RL-driven training.

Agentic Reinforcement Learning

Building autonomous agents that use RL to navigate, plan, and interact with tool environments.

Smart Reward Construction

Designing reward functions that guide RL agents toward desired behaviors without reward hacking.

RL in Robotics

Applying reinforcement learning to robotic manipulation and locomotion using the LeRobot library.

ML / Deep Learning

3 areas

Deep Architectures

Designing and training CNNs, RNNs, Transformers, and other modern deep learning architectures for research.

Transfer & Few-Shot Learning

Adapting pre-trained models to new domains and tasks with minimal labeled data.

Training Acceleration

Optimizers, learning rate schedules, mixed-precision, and distributed training for faster convergence.

Computer Vision

7 areas

Object Detection

Localizing and classifying objects in images using modern detection architectures.

Segmentation

Pixel-level scene understanding with clean contour boundaries and region parsing.

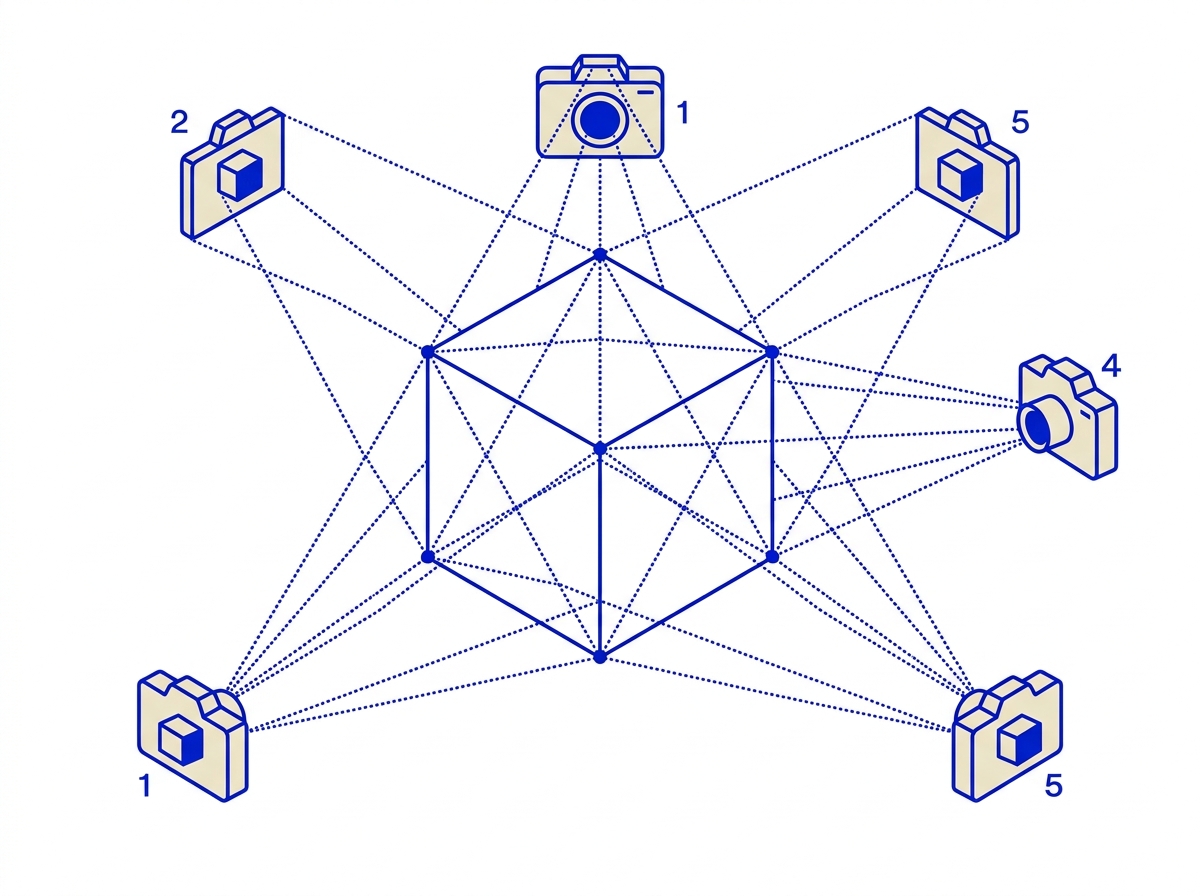

3D Vision

Reconstructing 3D geometry from multi-view images using neural implicit representations.

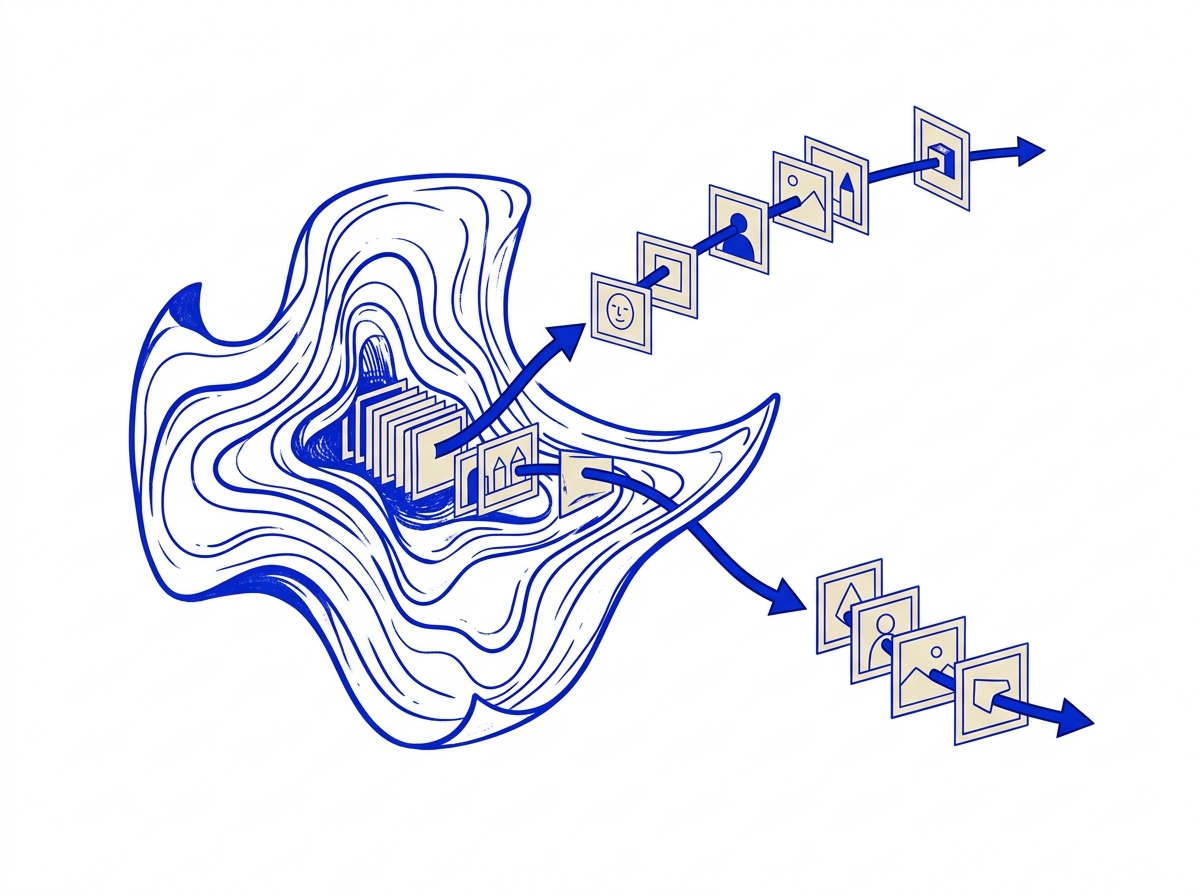

Generative Vision

Diffusion models, GANs, and VAEs for image synthesis, editing, and style transfer.

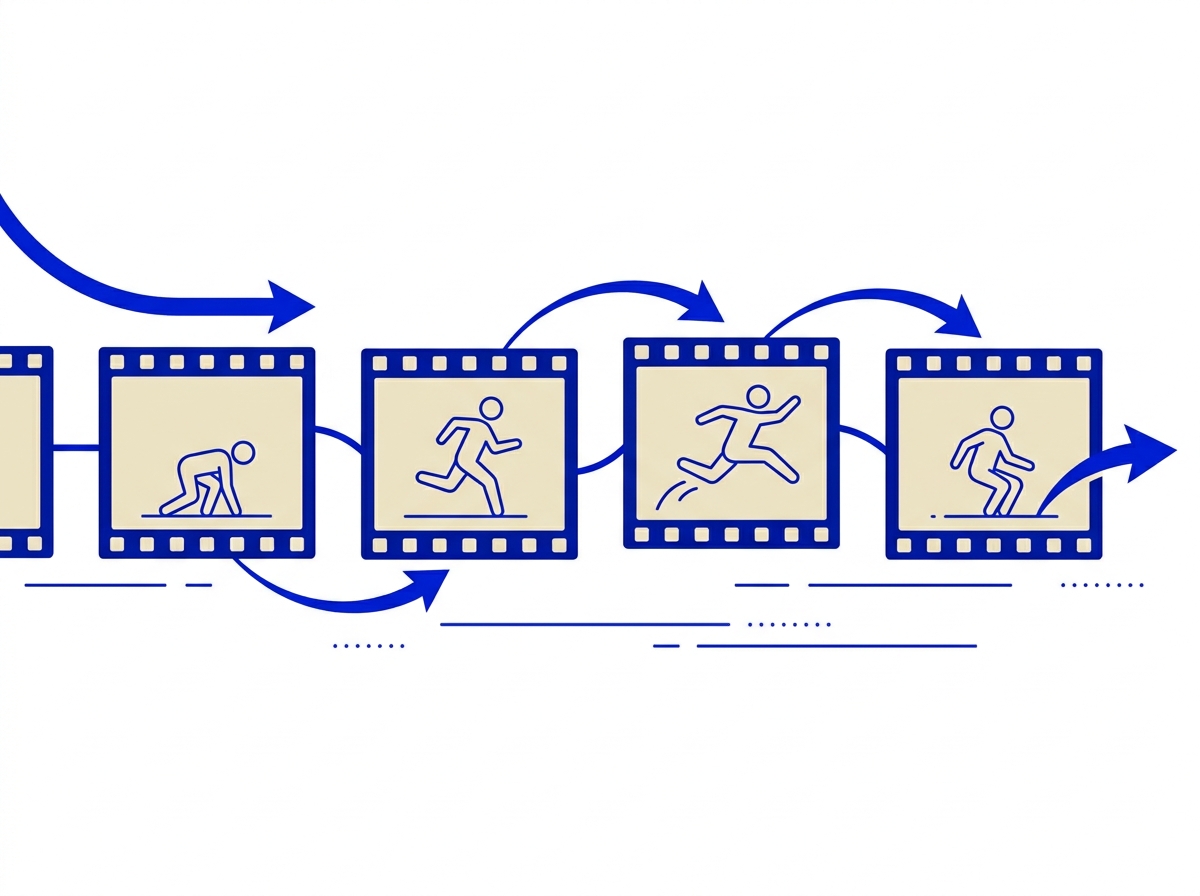

Video Understanding

Temporal modeling, action recognition, and motion estimation across video sequences.

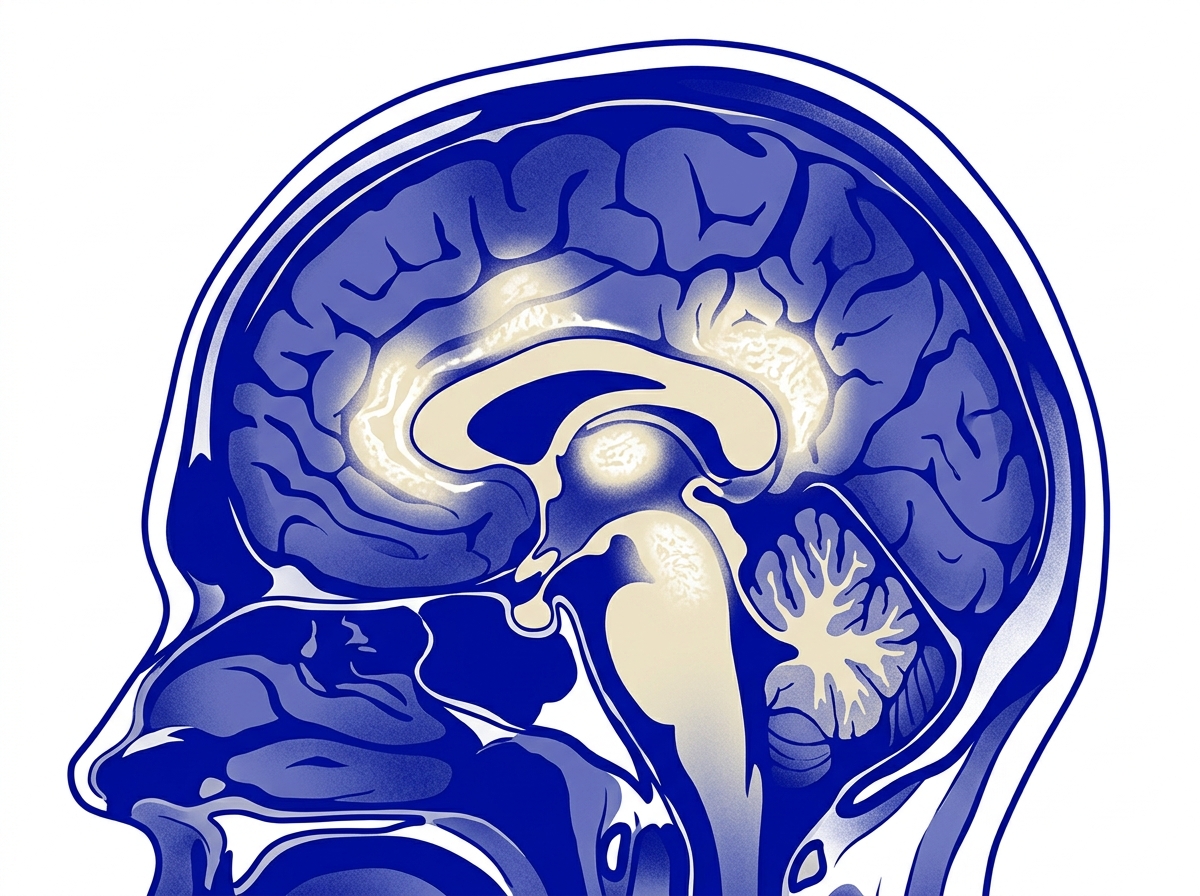

Medical Imaging

AI-driven analysis of medical scans for detection, segmentation, and diagnosis support.

Vision-Language Models

Bridging visual and textual understanding with multimodal transformers and VLMs.

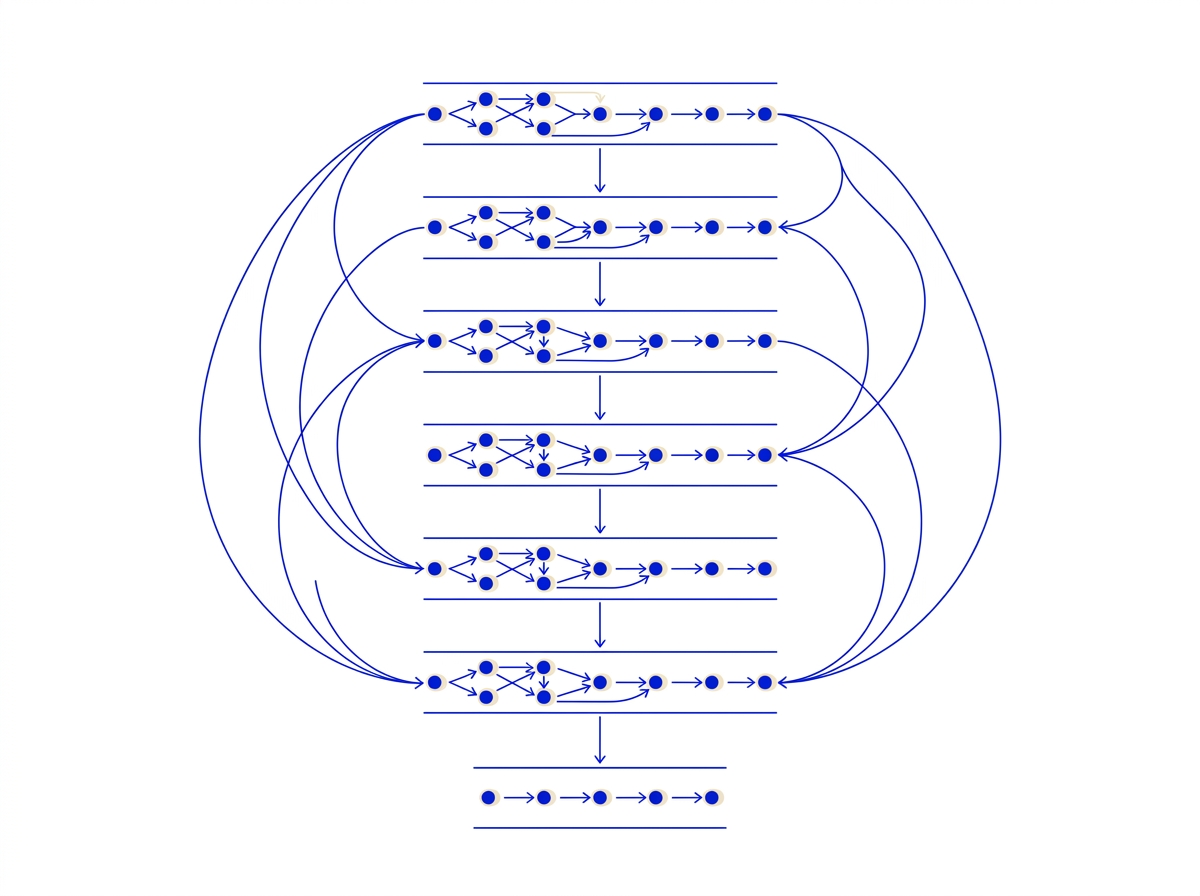

Generative AI

5 areas

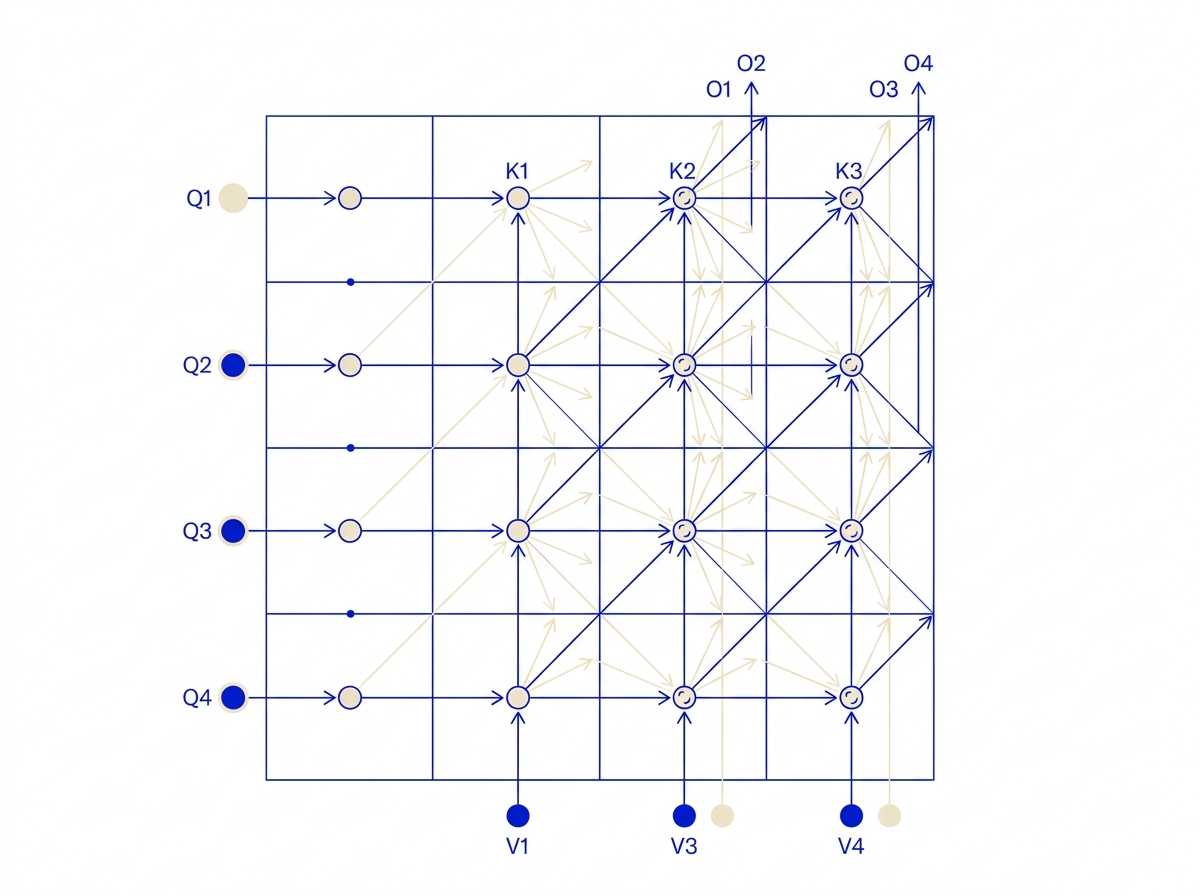

Transformer Architectures

Deep dive into attention mechanisms, positional encodings, and architecture innovations.

Prompt Engineering

Crafting and optimizing prompts for controlled, high-quality LLM outputs.

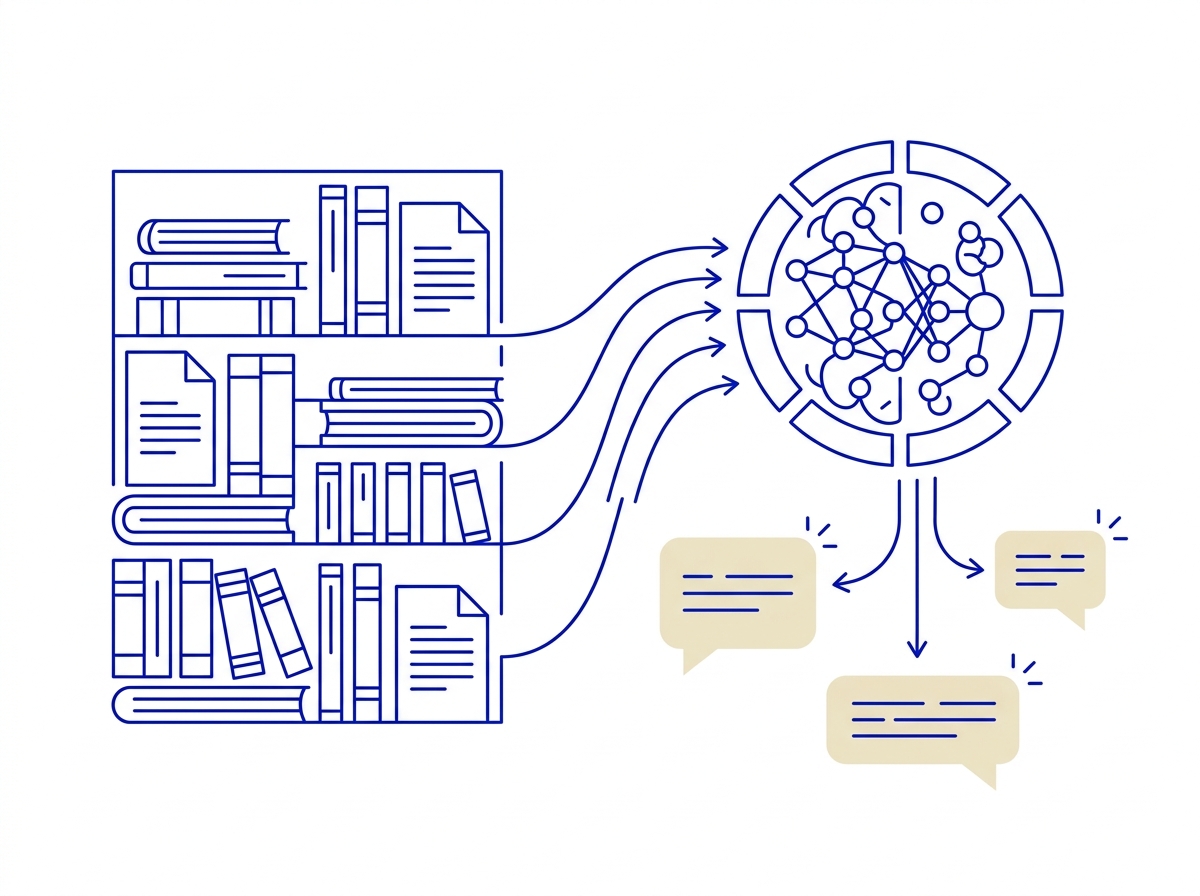

RAG Systems

Combining external knowledge retrieval with LLMs for grounded, factual generation.

AI Agents & Tools

Building autonomous agents that plan, reason, and interact with APIs and external tools.

LLM Evaluation

Systematic evaluation of LLM capabilities across reasoning, safety, and domain tasks.

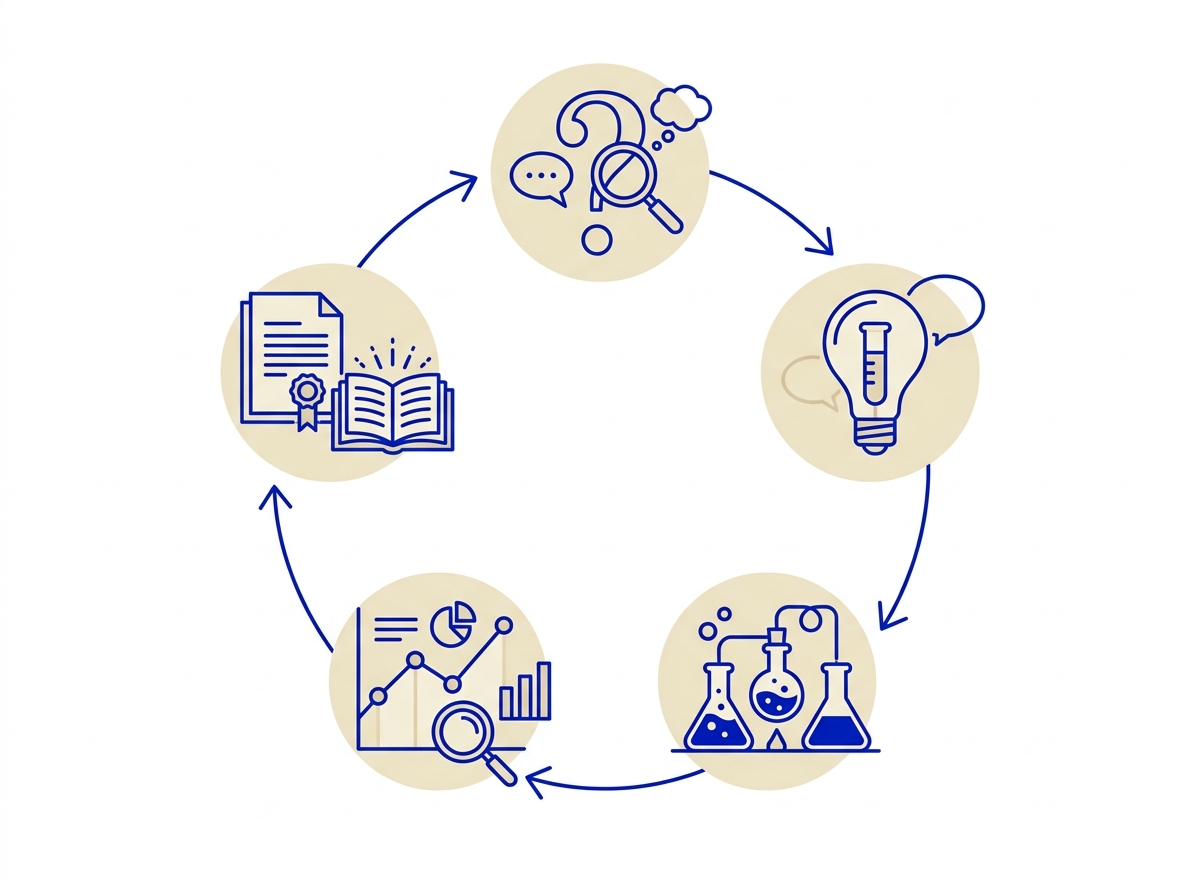

AI High School Research

4 areas

Intro to AI & ML

Foundational concepts in machine learning, neural networks, and data-driven thinking for beginners.

Scientific Method

Learning to formulate hypotheses, design experiments, and analyze results in AI research.

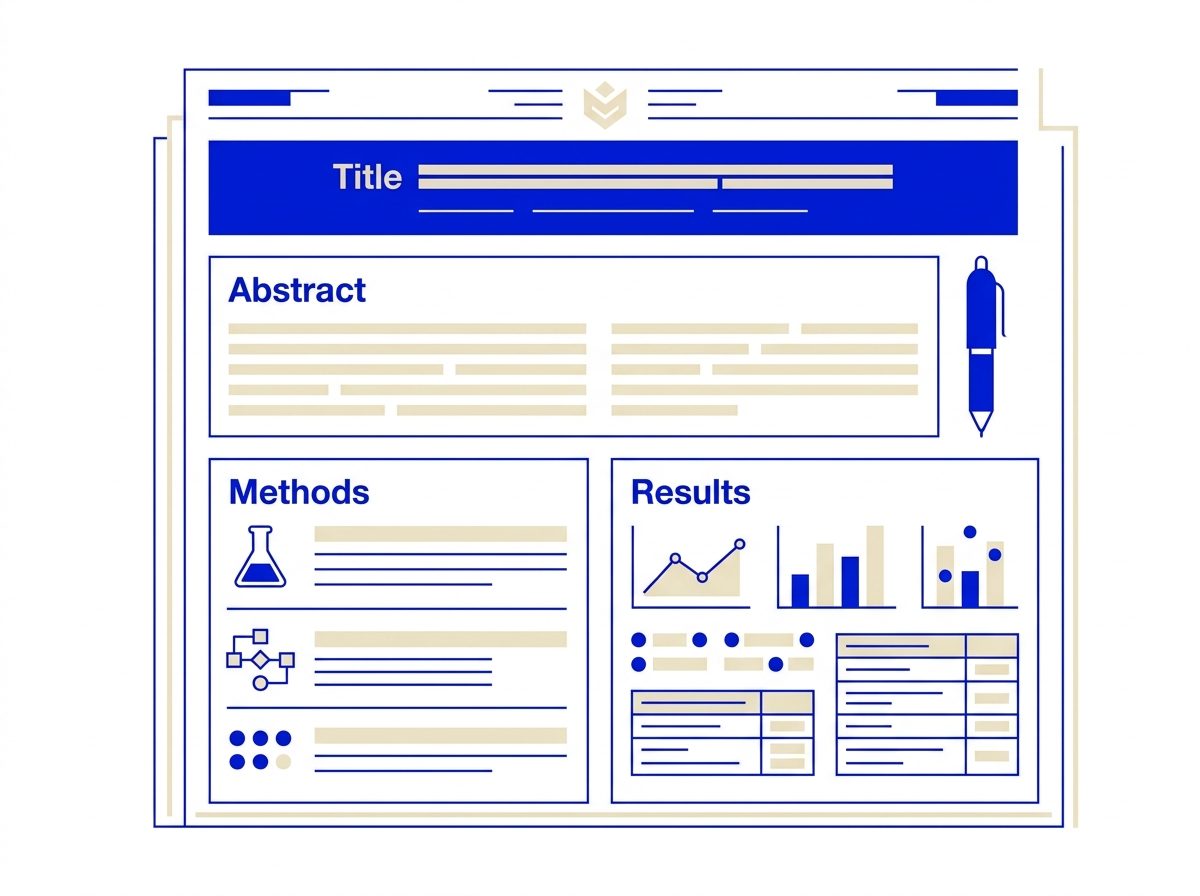

Paper Writing

Structuring abstracts, methods, results, and discussions for publication-ready academic papers.

AI Ethics

Understanding bias, fairness, transparency, and societal impacts of artificial intelligence.

Founded by Researchers

An interdisciplinary team with roots in MIT, Purdue, and IIT Madras.

Dr. Raj Dandekar

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. Dr. Raj specializes in building LLMs from scratch, including DeepSeek-style architectures. His expertise spans AI agents, scientific machine learning, and end-to-end model development.

Dr. Sreedath Panat

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. 10+ years of research experience. Dr. Panat brings deep technical expertise from both academia and industry to make complex AI concepts accessible and practical.

Dr. Rajat Dandekar

Co-founder, Vizuara AI Labs

PhD from Purdue University, B.Tech and M.Tech from IIT Madras. Dr. Rajat brings deep expertise in reinforcement learning and reasoning models, focusing on advanced AI techniques for real-world applications.

Stories from our researchers

Milestones, acceptances, and moments shared by Vizuara students and alumni on LinkedIn.

In their own words

Reflections from researchers who have been through a Vizuara bootcamp.

Frequently Asked Questions

Everything you need to know about our research bootcamps.

Still have questions?

Contact Us